Background

For those of you who were not aware of its existence, I compiled a “consensus big board” for the 2021 NFL Draft. Rather than looking at a big board from a single source, this “consensus board” calculated the average big board ranking from 14 of the biggest names, which included the following:

- ESPN

- Brugler

- PFN

- Mock Draft DB

- NFL.com

- Matt Miller

- PFF

- CBS

- Draft Diamonds

- Bleacher Report

- TDN

- DraftTek

- FanSpeak

- 4for4

The resulting product was the following distribution visualization and the consensus ranking of each draft prospect:

There were a few big surprises throughout the draft, with the Cleveland Browns getting the “steal of the draft” in Jeremiah Owusu-Koramoah. His consensus ranking among the 14 big boards was #13 overall, yet he fell all the way to #52. On the other hand, Payton Turner might be considered the biggest reach, courtesy of the New Orleans Saints. His consensus ranking among the 14 big boards was #71, but he was selected at #28. There are a bunch of different charts and graphs analyzing the draft, broken down by position, college and value, available in the Tableau sheet.

With the main event in the books, I wanted to dig a little deeper and analyze the analysis (?) to see which individual big boards performed the best at “predicting” the draft. But before diving right in, a quick word of caution.

Full disclosure: I am fully aware that big boards != mock drafts and do not take into consideration team needs, trades, etc., etc. This isn’t about which big board author was right or wrong, because it’s nearly impossible to evaluate with the fluid nature of the draft. In other words, this isn’t about which big boards were the best compared to actual draft results, but rather which big boards were the best when compared to one another.

Methodology

A quick note on how the “accuracy scores” work: in order to measure “accuracy”, or some semblance of it, I calculated the difference between each player’s ranking on an individual big board and his actual draft position. The difference is the absolute value of big board rank minus draft position. For instance, if a player was drafted 10th and had a big board rank of 14, the value would be 4. The same would be true if a player was drafted 14th with a big board rank of 10.
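The per-player calculation is simple enough to sketch in a couple of lines of Python. The function name `rank_error` is just a label chosen for this illustration, not something from the original workbook:

```python
def rank_error(board_rank: int, draft_pick: int) -> int:
    """Absolute gap between a player's big board rank and actual draft slot."""
    return abs(board_rank - draft_pick)


# The two cases from the example above give the same value.
print(rank_error(board_rank=14, draft_pick=10))  # 4
print(rank_error(board_rank=10, draft_pick=14))  # 4
```

Taking the absolute value means a board is penalized equally for ranking a player too high or too low.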

Even the best big boards missed by an average of about 30 spots between where they ranked a player and where he was actually drafted. That’s a huge gap for a draft with only 260 total picks. However, most of this variation comes from rounds 4-7, where the gap between prospects is much smaller than in the first round and the difference between a player ranked 200 and one ranked 300 matters far less.

Then, using this number, we can look at each round (or multiple rounds) and determine how closely the big board resembled the order of draft selections. Finally, each board is scaled from 0-100, because who doesn’t love a simple grading scale (thanks PFF!). A perfect score of 100 means that the big board had the exact same ranking as the actual draft order, which as we know is nearly impossible. A score of 0 means that the big board didn’t include any of the drafted players at all. A score of 50 means that the average gap between big board ranking and actual draft position is 50 spots.
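Here is a rough sketch of how a score like this could be computed. It assumes the score is simply 100 minus the average gap (which matches the "score of 50 means a 50-spot average gap" description), floored at 0. How unranked players were actually handled isn't spelled out here, so the 100-spot penalty below is purely an assumption, and the sample picks are made-up numbers, not real draft data:

```python
from statistics import mean

# Assumption: a drafted player missing from a board counts as a 100-spot miss.
MISSING_PENALTY = 100


def accuracy_score(picks, rounds=None):
    """Score a board from 0-100, assumed here to be 100 minus the mean gap.

    picks:  iterable of (draft_round, draft_pick, board_rank or None)
    rounds: optional set of rounds to keep, e.g. {1, 2, 3}
    """
    errors = [
        MISSING_PENALTY if board_rank is None else abs(board_rank - draft_pick)
        for draft_round, draft_pick, board_rank in picks
        if rounds is None or draft_round in rounds
    ]
    if not errors:
        return 0.0
    return max(0.0, 100.0 - mean(errors))


# Made-up sample: (round, pick, board rank); None means the board left him off.
sample_picks = [
    (1, 10, 14),     # gap of 4
    (2, 52, 13),     # gap of 39
    (4, 120, None),  # unranked, assumed 100-spot miss
]

full_draft = accuracy_score(sample_picks)
first_two_rounds = accuracy_score(sample_picks, rounds={1, 2})
print(round(full_draft, 1), round(first_two_rounds, 1))
```

Passing a `rounds` set is one way to get the single-round and multi-round views from the same data without recomputing the gaps.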

Analysis

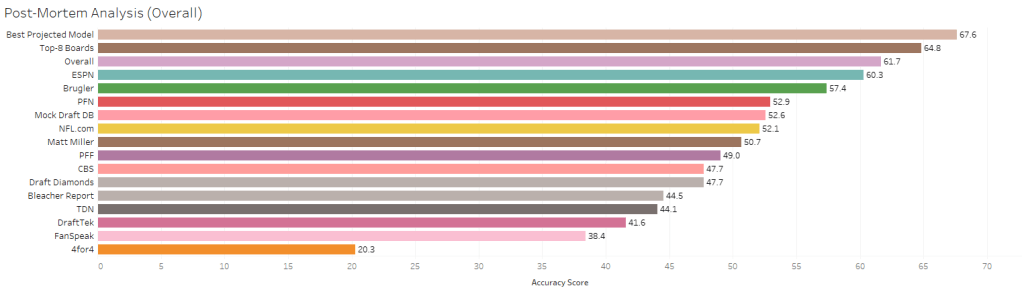

Now that all that nerd talk is finished, here is the full 7-round accuracy of each board:

The 60.3 overall accuracy score for ESPN means that the average gap between a player’s big board rank and actual draft position is about 40 spots (which is almost a whole draft round!). To be fair, this is about comparing the performance of boards to one another, not to the actual draft. ESPN was still more accurate in its rankings than any other single board and about 3 times more accurate than 4for4. As previously mentioned, the overall poor showing has more to do with the variation late in the draft than with poor big board rankings. If you were wondering about the “Best Projected Model”, “Top-8 Boards” and “Overall” that scored even better than ESPN, those are the consensus boards I created. The fact that they performed better than any individual board validates that there is some utility in a consensus ranking. More on that later…

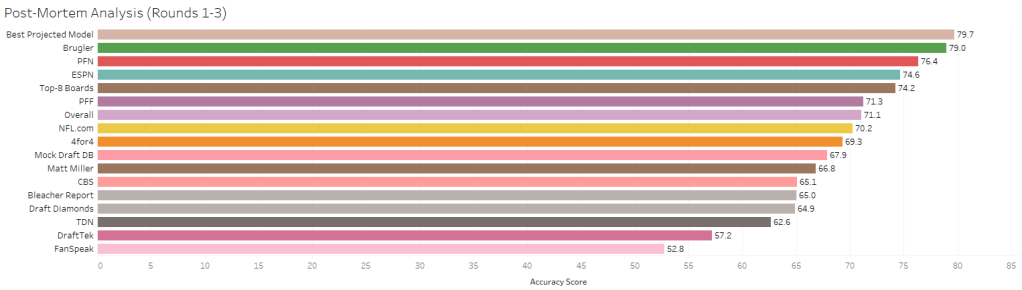

If we try to remove some of the noise in rounds 4-7, this is the data filtered to the first three rounds of the draft:

Looking at the first three rounds, the Brugler big board jumps to the top of the list with a score of 79, which means the average error is down to 21 spots. That is roughly a 2-spot improvement in average accuracy over PFN and ESPN, but a massive ~26-spot improvement over FanSpeak.

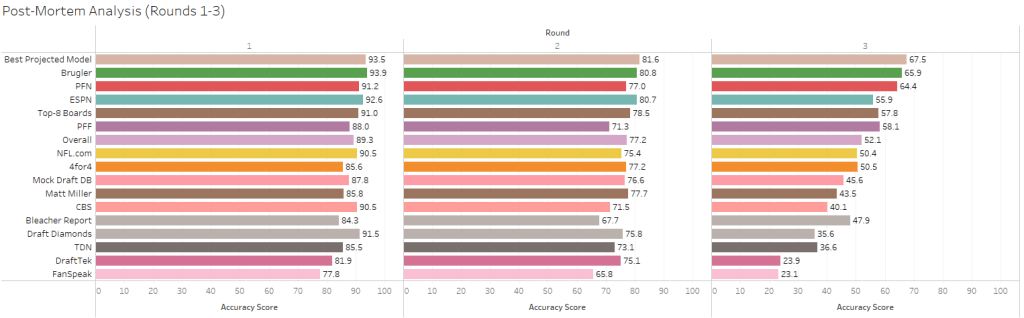

Things look even more impressive when looking at each round individually:

All of the boards performed better in the first round than in rounds 2 or 3, which makes sense because it’s easier to predict draft position earlier in the draft. However, what is more interesting is that some boards performed much better relative to the others in different rounds. For instance, ESPN performed very well in rounds 1 and 2 (2nd only to Brugler), but fell to 5th in predicting the 3rd round. Likewise, PFN was the 4th best board in the 1st round, the 7th in the 2nd and the 2nd in the 3rd. The only constants across rounds were Brugler’s dominance and FanSpeak being graded the lowest.

The 2022 NFL Draft

This brings me to the 2022 NFL Draft, which will also feature my consensus big board. Last year I did two different consensus boards: (1) the average across all boards and (2) the top-8 boards. The top-8 boards only included the ones I believed were most reliable, but now my hunches can be verified using data. The top-8 boards included Bleacher Report, Brugler, CBS, ESPN, NFL.com, PFF, PFN and TDN. Just like in the Premier League, I’m going to be relegating some of these boards out of the top list (especially TDN and Bleacher Report). But the fun isn’t going to stop there – I’m going to be developing a weighted model of boards that will *hopefully* be more accurate than a simple average. You can see what the results of this would look like when applied to last year’s data.

The “Best Projected Model” was the top performing big board in rounds 2 and 3, while coming in second behind Brugler in the first round. It performed better throughout all 7 rounds than any other board and was the best for rounds 1 through 3 combined. I’ll be putting this to the test in 2022 and will continue to tweak the model as necessary.
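For anyone curious about the difference between a simple average and a weighted one, here is a minimal sketch. To be clear, this is not the actual “Best Projected Model”: the weights and the player ranks below are made-up numbers for illustration, and `consensus_rank` is a hypothetical helper name. One plausible approach (an assumption on my part) would be to give boards with better prior-year accuracy a larger weight:

```python
def consensus_rank(ranks, weights=None):
    """Average one player's rank across boards, optionally weighted.

    ranks:   dict of board name -> that board's rank for the player
    weights: dict of board name -> weight (defaults to equal weights)
    """
    if weights is None:
        weights = {board: 1.0 for board in ranks}
    total_weight = sum(weights[board] for board in ranks)
    return sum(ranks[board] * weights[board] for board in ranks) / total_weight


# Made-up ranks for a single hypothetical prospect.
player_ranks = {"Brugler": 10, "ESPN": 12, "FanSpeak": 30}

# Hypothetical weights: more trust in boards that scored better last year.
board_weights = {"Brugler": 3.0, "ESPN": 2.0, "FanSpeak": 1.0}

simple = consensus_rank(player_ranks)
weighted = consensus_rank(player_ranks, board_weights)
print(round(simple, 1), round(weighted, 1))
```

With these toy numbers the weighted rank sits closer to the boards that carry more weight, which is the whole idea behind moving beyond a simple average.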

Thanks for staying tuned and interacting with the viz!